A fighter plane flying at 1000 km/h fires a few bullets, which leave the barrel at 2000 km/h. The plane then accelerates to 2000 km/h and catches up with the bullets. Is this statement true or false?

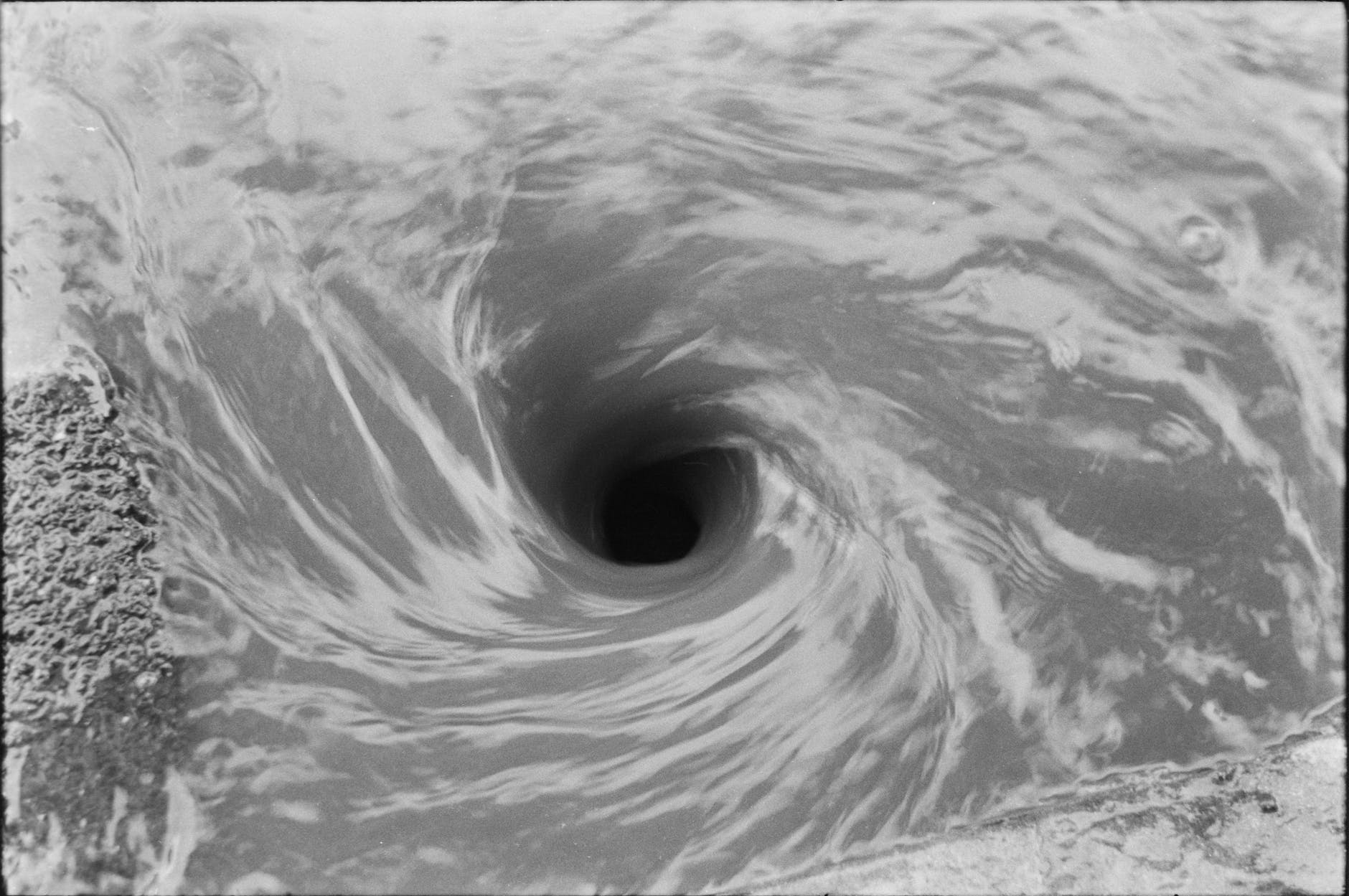

If this question is asked of a primary school student who sees only the facts in the statement, the answer is likely to be no. A student who has understood physics and the dynamics in play, however, will bring air resistance, terminal velocity and acceleration into the equation and say it is possible. The plane can catch the bullets because a bullet, having no propulsion of its own, is slowed by air resistance, loses its forward velocity and falls towards the ground. As it falls it settles towards its terminal velocity, which drops further as the air becomes denser closer to the ground. The plane, thanks to its thrust, can dive faster than that terminal velocity and will eventually overtake the bullets, provided all this happens at a sufficient altitude. It has actually happened, so it is not just a theoretical possibility; check this link.

Many of the professional managers and executives in software development I have observed hold a static understanding of agility. They are well versed in a lot of terms and concepts which, in their minds, are non-negotiable and have to be followed to the letter. Examples: estimating with Fibonacci numbers only, planning only for a repeatable velocity iteration over iteration, enforcing either solo or pair programming exclusively, and locking main branches so that changes go in only through PRs with gated reviews. The list goes on, without ever addressing the nimbleness that is needed.

This management mindset is the opposite of the agile philosophy: it treats the development team as resources to be utilised and deals with people as numbers. The best software teams are the ones that understand the business and use measurable results to back their decisions, which helps them continuously modify the software to serve business goals. If a business idea takes a month or more to implement and test, there is more or less no agility; people are merely convinced that they are following the so-called AGILE PROCESS. With the right ways of working (not process), and barring major platform work, most idea-to-production cycles should take days or weeks, not months.

What gives agility? Before we get to that, we should answer what kills agility. My take is that two things kill agility: (1) professional management and (2) certifications, processes and frameworks claiming to be AGILE. Professional management is conventionally equipped to deal with industrial and manufacturing settings. It always starts from there, with an efficiency-oriented management style, and only then tries to adapt to an effectiveness-oriented one.

When people treat software development as a static system, things appear black and white, easy to understand and predict. That is hardly the reality: software development is a complex dynamic system that inherently has to be driven by the people on the ground rather than by management. Management's focus should be to act as a facilitator: ensure that staffing is good, the right tools are in place and the work environment is not toxic, and remove impediments to business knowledge and communication. As far as possible, avoid the cargo-cult way (a.k.a. some major agile framework) of managing software projects; understand the dynamics at play and plan based on them.

Some things that have worked really well for me:

- Very good individual contributors who excelled at their work made better leaders as managers than professional managers from reputed schools

- Long-running, small and stable teams achieve bigger results than frequently churned or large teams. They may start slowly, but they accelerate to a velocity that is sometimes hard for the business to keep up with

- Certifications and frameworks in the AGILE business do a lot more harm than good

- There is no substitute for XP practices, continuous delivery and engineering rigour

- Developers who understand the business contribute many times more than business people who understand tech